Westwell, in collaboration with a research team led by Professor Guang Chen of Tongji University and Shanghai Innovation Institute (SII), has published new research in Nature Communications introducing a latency-aware evaluation framework designed to improve real-time perception in autonomous systems.

In high-speed, dynamic scenarios such as autonomous driving and embodied intelligence in smart logistics, the responsiveness of visual perception systems is a decisive factor in ensuring safety and reliability in real-world deployment.

The study, titled “Bridging the latency gap with a continuous stream evaluation framework in event-driven perception,” presents STARE (STream-based lAtency-awaRe Evaluation) — a framework that addresses a critical but often overlooked challenge in real-world AI deployment: perception latency.

Westwell and Tongji University jointly published new research in Nature Communications

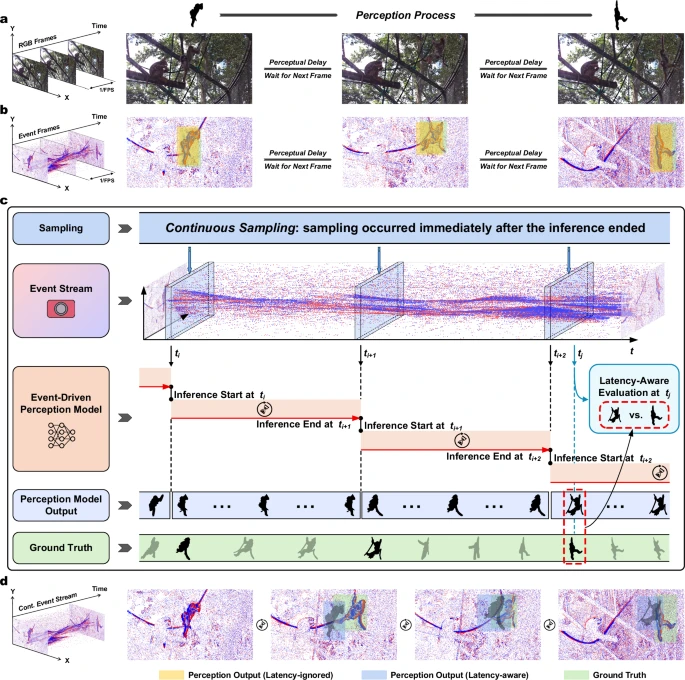

Traditional vision systems rely on frame-based cameras that capture images at fixed intervals, introducing inherent temporal discontinuities. In fast-changing environments such as autonomous driving, the gaps between frames can lead to outdated predictions, limiting a system's ability to respond to sudden events. To mitigate this, industry deployments often depend on multi-sensor fusion to improve redundancy and accuracy.

Event cameras offer an alternative. Inspired by biological vision, these neuromorphic sensors asynchronously encode pixel-level brightness changes at microsecond resolution, generating continuous event streams rather than discrete frames. This enables more efficient and responsive perception, particularly in high-speed scenarios.

Despite these advantages, current evaluation methodologies remain rooted in frame-based paradigms. Continuous event streams are typically converted into fixed-rate event frames, and model performance is assessed under the assumption of instantaneous computation.
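The conversion described above can be illustrated with a minimal sketch. It assumes events arrive as `(t_us, x, y, polarity)` tuples and accumulates them into signed count images over fixed time windows. The function name, the tuple layout and the signed-count representation are illustrative assumptions, not details taken from the paper.

```python
def events_to_frames(events, width, height, window_us):
    """Accumulate (t_us, x, y, polarity) events into fixed-rate event frames.

    Each frame covers one time window of length window_us. A pixel's value is
    the number of positive events minus the number of negative events that
    fell on it during that window (an assumed representation).
    """
    if window_us <= 0:
        raise ValueError("window_us must be positive")
    if not events:
        return []
    last_t_us = max(t_us for t_us, _, _, _ in events)
    n_frames = last_t_us // window_us + 1
    frames = [[[0] * width for _ in range(height)] for _ in range(n_frames)]
    for t_us, x, y, polarity in events:
        frames[t_us // window_us][y][x] += 1 if polarity else -1
    return frames


if __name__ == "__main__":
    # Three hypothetical events on a 2x2 sensor, binned into 1 ms windows.
    demo_events = [(100, 0, 0, 1), (900, 1, 0, 0), (1500, 1, 1, 1)]
    for index, frame in enumerate(events_to_frames(demo_events, 2, 2, 1_000)):
        print(index, frame)
```

Whatever happens inside a window is collapsed into a single image, so the microsecond timing of individual events is no longer visible to the model or to the evaluation.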

This overlooks a critical factor: perception latency—the time elapsed between an event occurring and the corresponding model output reaching downstream systems. In real-world applications, even small delays can result in outdated perception results and compounding errors, making latency a key bottleneck for deployment.

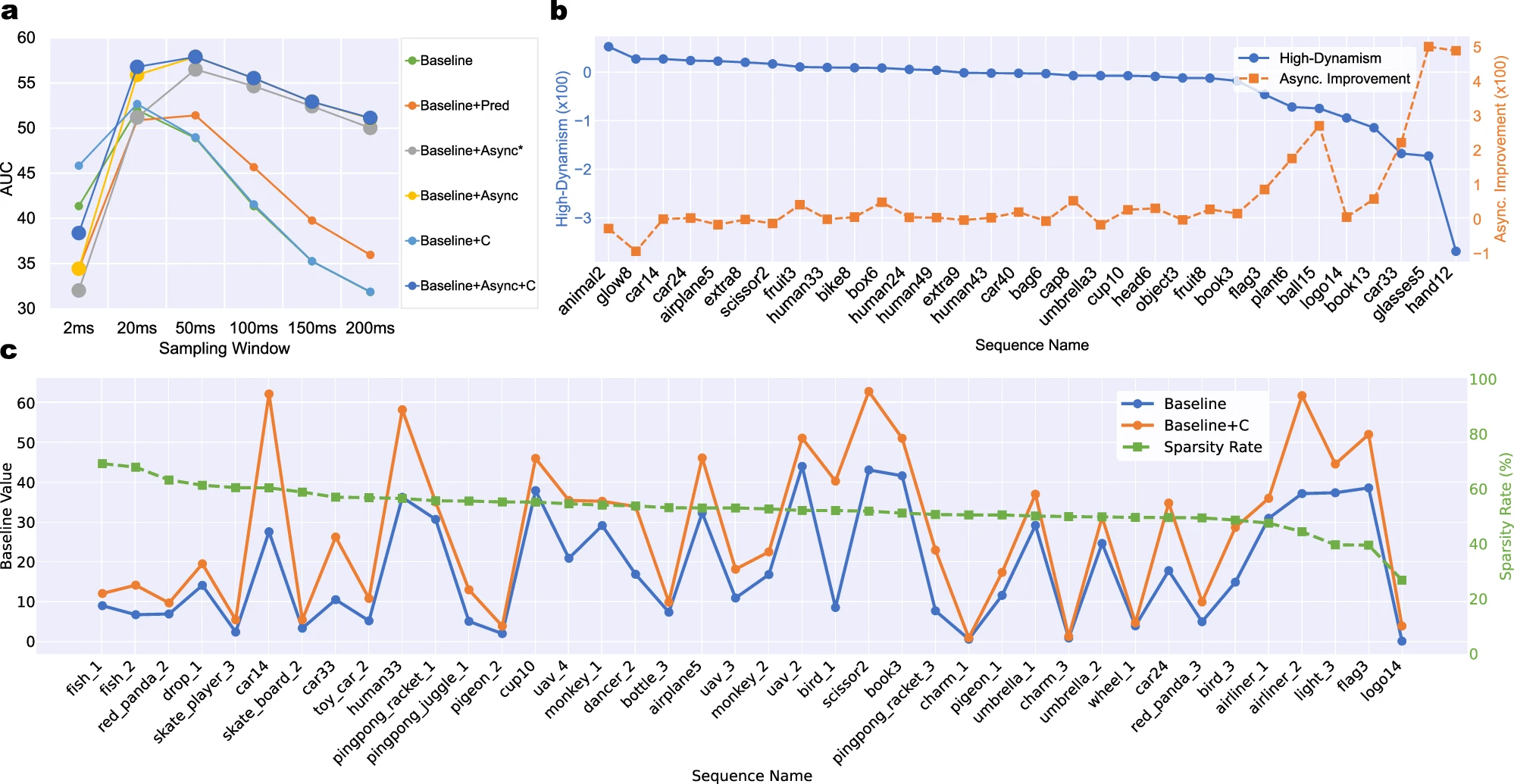

To close this gap, the joint research team introduced the STARE framework, built on two core components. First, Continuous Sampling has the model begin processing the latest events as soon as its previous processing cycle finishes, maximizing model throughput for real-time use. Second, Latency-Aware Evaluation incorporates latency directly into accuracy metrics, quantifying how delayed outputs degrade real-time performance.

STream-based lAtency-awaRe Evaluation (STARE) framework

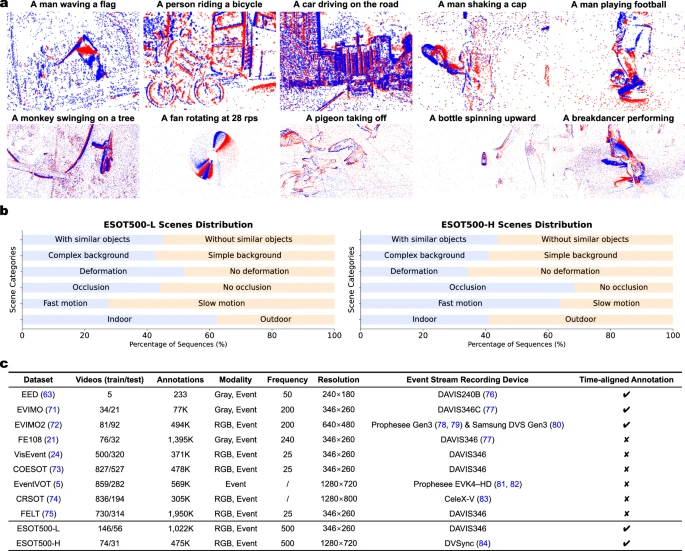
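The two components can be sketched in a small simulation. This is a simplified illustration under stated assumptions, not the paper's implementation: the model has a fixed per-cycle latency, the target moves along one axis, and each query is scored against the newest output that already exists at query time. The function names and the error measure are hypothetical.

```python
from bisect import bisect_right


def continuous_sampling_schedule(latency_us, duration_us):
    """Back-to-back inference cycles: each starts when the previous one ends.

    Returns a list of (start_us, finish_us) pairs. A fixed per-cycle latency
    is an assumption made to keep the sketch simple.
    """
    if latency_us <= 0:
        raise ValueError("latency_us must be positive")
    cycles = []
    start_us = 0
    while start_us + latency_us <= duration_us:
        cycles.append((start_us, start_us + latency_us))
        start_us += latency_us
    return cycles


def latency_aware_errors(cycles, query_times_us, truth):
    """Score each query against the newest output that already exists.

    The output of a cycle describes the scene at the cycle's start time but
    only becomes available at its finish time. Queries that arrive before
    any output exists are reported as None.
    """
    finish_times = [finish for _, finish in cycles]
    errors = []
    for query_us in query_times_us:
        k = bisect_right(finish_times, query_us) - 1  # last cycle finished by now
        if k < 0:
            errors.append(None)
            continue
        start_us, _ = cycles[k]
        errors.append(abs(truth(query_us) - truth(start_us)))
    return errors


if __name__ == "__main__":
    # Hypothetical target moving at 1 pixel per millisecond.
    truth = lambda t_us: t_us / 1000.0
    cycles = continuous_sampling_schedule(latency_us=10_000, duration_us=100_000)
    queries = range(0, 100_000, 2_000)  # one query every 2 ms, i.e. 500 Hz
    scored = [e for e in latency_aware_errors(cycles, queries, truth) if e is not None]
    print("mean latency-aware error (px):", sum(scored) / len(scored))
```

In this toy setup the model is perfect at the moment it samples the scene, so an evaluation that assumes instantaneous computation would report zero error. Scoring each query against the output actually available at that time yields a nonzero error that grows with latency and target speed.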

To validate the framework, the team developed a new event dataset with high-frequency annotations: ESOT500, a 500 Hz object tracking dataset in which each annotation simulates a real-world downstream query. Experimental results show that perception latency can reduce online accuracy by more than 50%, highlighting a substantial mismatch between conventional benchmarks and real deployment scenarios.

The ESOT500 dataset for high-dynamic event-driven perception

Based on these findings, the team further proposed two model enhancement strategies, providing a practical pathway for the real-world deployment of real-time perception systems.

- Asynchronous Tracking: A dual-architecture approach combining a high-accuracy base model with a lightweight residual model for rapid updates. This increases model throughput by 78% and improves latency-aware accuracy by up to 60%.

- Context-Aware Sampling: Dynamically adjusts model input and activation based on event density around the target, maintaining robust performance in sparse and complex scenarios. This approach improves performance by more than 51% in challenging scenarios.

Quantitative evaluation of model enhancement strategies under STARE
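The idea behind Context-Aware Sampling can be illustrated with a rough sketch. It assumes events are `(t_us, x, y, polarity)` tuples and the target is an axis-aligned box. The sampling window is widened while too few events fall near the target, and the model is not activated if the region stays sparse. The function names, thresholds and doubling rule are hypothetical choices for illustration, not the strategy reported in the paper.

```python
def events_near_target(events, box, t_now_us, window_us):
    """Return events inside the box that occurred within the last window_us."""
    x0, y0, x1, y1 = box
    return [
        event
        for event in events
        if t_now_us - window_us < event[0] <= t_now_us
        and x0 <= event[1] < x1
        and y0 <= event[2] < y1
    ]


def choose_input(events, box, t_now_us,
                 base_window_us=2_000, max_window_us=16_000, min_events=3):
    """Pick the model input and decide whether to run the model at all.

    Returns (selected_events, window_us, activate). The window doubles until
    the target region holds at least min_events events or the maximum window
    is reached (all three parameters are assumed values).
    """
    window_us = base_window_us
    while True:
        selected = events_near_target(events, box, t_now_us, window_us)
        if len(selected) >= min_events:
            return selected, window_us, True
        if window_us >= max_window_us:
            return selected, window_us, False
        window_us *= 2


if __name__ == "__main__":
    demo_events = [(1_000, 5, 5, 1), (9_000, 5, 5, 1), (9_500, 6, 5, 0),
                   (9_900, 5, 6, 1), (9_950, 50, 50, 1)]
    selected, window_us, activate = choose_input(demo_events, (0, 0, 10, 10), 10_000)
    print(len(selected), window_us, activate)
```

Tying the input window to local event density keeps the model from running on near-empty input when the target is slow or briefly invisible to the sensor, while dense motion is still sampled at the shortest window.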

Moving forward, the STARE framework also opens new directions for research in event-driven perception, including hardware-software co-design, real-time robotic control, and learning-based event sampling.

By emphasizing temporal alignment between sensing, computation and application requirements, the study provides a pathway toward unlocking the full potential of neuromorphic vision systems in real-world environments.

This research represents an important step in Westwell's broader efforts to advance real-time perception technologies, supporting its long-term roadmap for future applications across large-scale logistics scenarios including seaports, airports, dry ports, railway hubs and factories.

As an AI and autonomous driving technology company, Westwell has deployed its self-developed autonomous heavy-duty transport vehicles and intelligent logistics solutions in multiple global projects, gaining extensive real-world experience.

Westwell will continue to collaborate with leading academic and research institutions to translate cutting-edge research into scalable industrial applications, supporting the digital and sustainable transformation of the global logistics industry.

Full paper: https://www.nature.com/articles/s41467-026-70240-6